In a recent announcement (February 2016), Twitter spoke out against the use of its microblogging platform to promote terrorism. It reported having suspended over 125,000 profiles for threatening or promoting terrorist acts, primarily related to the Islamic State, using manual review and proprietary anti-spam technology.

Twitter remarks: ‘As many experts and other companies have noted, there is no “magic algorithm” for identifying terrorist content on the internet, so global online platforms are forced to make challenging judgement calls based on very limited information and guidance.’

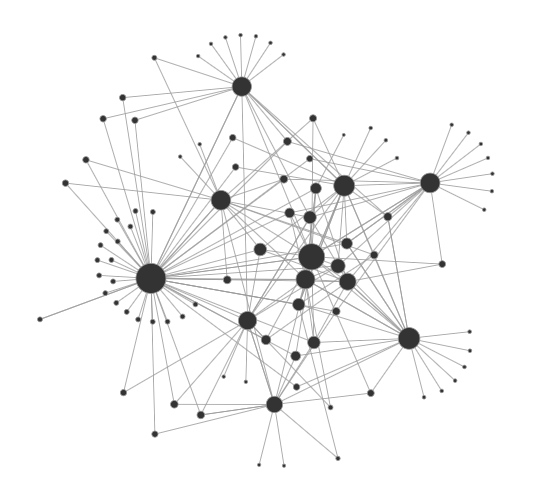

Twitter’s mission is challenging. For every subversive profile suspended, a new profile appears. Profiles that have not yet been suspended then broadcast the existence of the new profile, and so on, in an endless cat-and-mouse game.

Over the course of several terrorist attacks that occurred in the past year, Textgain has developed a proof-of-concept that automatically identifies hate speech. We have collected a large number of subversive tweets that promote hate and terrorism. In parallel, we have collected non-incendiary tweets on the same topic from journalists, experts, religious leaders, Muslim women, and others. Using machine learning and text analytics techniques, we then compared both datasets to determine which features (words, word combinations, …) correlate strongly with hate speech. For example, out-of-place words such as kafir (infidel, كافر) and dawla (roughly: temporary state, دولة) combined with words such as dog or rage may raise the alarm.
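The comparison of the two datasets can be illustrated with a small sketch. This is not Textgain's actual method (the text does not say which model is used); it swaps in one simple, well-known technique, a smoothed log-odds ratio, to rank word and word-pair features by how much more often they occur in one set of tweets than in the other. The example tweets and the helper names `features`, `count_features` and `log_odds` are hypothetical.

```python
import math
import re
from collections import Counter


def features(text):
    """Lowercased word unigrams plus adjacent-word bigrams ("word combinations")."""
    words = re.findall(r"\w+", text.lower())
    bigrams = [a + " " + b for a, b in zip(words, words[1:])]
    return words + bigrams


def count_features(texts):
    """Number of texts in which each feature occurs (document frequency)."""
    counts = Counter()
    for t in texts:
        counts.update(set(features(t)))
    return counts


def log_odds(hateful, neutral, alpha=1.0):
    """Smoothed log-odds ratio of a feature appearing in a hateful vs. a neutral text.

    Positive scores lean toward the hateful set, negative toward the neutral set.
    alpha is a Laplace smoothing constant so unseen features do not yield log(0).
    """
    h, n = count_features(hateful), count_features(neutral)
    scores = {}
    for f in set(h) | set(n):
        p_h = (h[f] + alpha) / (len(hateful) + 2 * alpha)
        p_n = (n[f] + alpha) / (len(neutral) + 2 * alpha)
        scores[f] = math.log(p_h / (1 - p_h)) - math.log(p_n / (1 - p_n))
    return scores


# HYPOTHETICAL toy data, invented for illustration only.
hateful = [
    "the kafir dog will feel our rage",
    "rage against the kafir",
    "long live the dawla",
]
neutral = [
    "experts discuss the meaning of the word kafir",
    "journalists report on the attacks",
    "religious leaders condemn the rage and violence",
]

if __name__ == "__main__":
    ranked = sorted(log_odds(hateful, neutral).items(), key=lambda kv: -kv[1])
    for feature, score in ranked[:5]:
        print(f"{score:+.2f}  {feature}")
```

Note that a word like kafir also appears in the neutral set (experts discussing it), so on its own it scores only mildly positive, while the combination "kafir dog" occurs only in the hateful set. A real system would learn from far more data and feed such features into a trained classifier rather than reading the scores off directly.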

Instead of relying on a fixed notion of what hate speech looks like, our system adapts to the available data as the rhetoric evolves. It is over 80% accurate in lab conditions. In the real world, however, automatically identifying one inflammatory tweet among a million others is very difficult. Such tools must be used with caution, since they can produce both false positives and false negatives. Nevertheless, Textgain believes that its technology can be a valuable aid to the manual review of subversive text.
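A back-of-the-envelope calculation with Bayes' rule shows why finding one tweet in a million is so hard even for an accurate model. The sketch below assumes, hypothetically, that "over 80% accurate" means 80% of inflammatory tweets are caught (sensitivity) and 80% of harmless tweets are correctly passed (specificity); the text does not break accuracy down this way. The helper names `precision` and `expected_flags` are illustrative.

```python
# Base-rate calculation with Bayes' rule.
# ASSUMPTION: "80% accurate" is read as 80% sensitivity and 80% specificity;
# the source does not report these two figures separately.


def precision(sensitivity, specificity, prevalence):
    """Probability that a flagged tweet is actually inflammatory."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


def expected_flags(n_tweets, sensitivity, specificity, prevalence):
    """Expected (true positives, false positives) among n_tweets."""
    hateful = n_tweets * prevalence
    benign = n_tweets - hateful
    return sensitivity * hateful, (1 - specificity) * benign


if __name__ == "__main__":
    prevalence = 1 / 1_000_000  # one inflammatory tweet per million (from the text)
    for spec in (0.80, 0.99):
        tp, fp = expected_flags(1_000_000, 0.80, spec, prevalence)
        p = precision(0.80, spec, prevalence)
        print(f"specificity={spec:.2f}: {tp:.1f} true vs {fp:,.0f} false alarms, "
              f"precision={p:.2e}")
```

Under these assumptions, roughly 200,000 harmless tweets per million would be flagged alongside less than one genuine one, giving a precision of about 4 in a million. Even at 99% specificity there would still be about 10,000 false alarms per million tweets. This is why such a tool is better suited to prioritizing tweets for human reviewers than to suspending profiles automatically.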

We will discuss and freely share our technology with platforms under pressure, such as Twitter, and with known security agencies. Ask us about it!

Press coverage [text analytics], most recent first:

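- ‘UA ontwikkelt detectiesoftware haatboodschappen’ (UA develops hate-message detection software), Het Journaal, VRT

- ‘Textgain ontwikkelt haatberichtendetector’ (Textgain develops hate-message detector), De Standaard

- ‘Antwerpse taaltechnologie legt haatberichten bloot’ (Antwerp language technology exposes hate messages), Laatste Nieuws

- ‘Deze software spoort online haatberichten op’ (This software tracks down online hate messages), De Morgen

- ‘Antwerpse taaltechnologie legt haatberichten bloot’ (Antwerp language technology exposes hate messages), De Morgen

- ‘Nieuwe software scant haatberichten’ (New software scans hate messages), VTM Nieuws

- ‘Vlaamse technologie traceert haatberichten’ (Flemish technology traces hate messages), Het Nieuwsblad

- ‘Nieuwe technologie filtert snel haatberichten’ (New technology quickly filters hate messages), Radio 2 (► 0:57:30)

- ‘Nieuwe technologie ontcijfert IS-propaganda’ (New technology deciphers IS propaganda), Gazet van Antwerpen

- ‘Antwerp researchers identify anonymous internet culprits’, Flanders Today

- ‘Des chercheurs anversois traquent la propagande djihadiste’ (Antwerp researchers track down jihadist propaganda), RTBF

- ‘Antwerps bedrijfje kan verborgen IS-boodschappen opsporen’ (Small Antwerp company can detect hidden IS messages), Gazet van Antwerpen

- ‘Un algorithme belge pour traquer les djihadistes’ (A Belgian algorithm to track down jihadists), L’Echo

- ‘Antwerpse technologie spoort IS-propaganda op’ (Antwerp technology detects IS propaganda), ATV

- ‘Antwerp-developed software to counter IS’, Flandersnews.be

- ‘Un logiciel anversois reconnaît la propagande de l’Etat islamique en ligne’ (Antwerp software recognizes Islamic State propaganda online), RTBF

- ‘Met deze software wil Antwerps bedrijf IS-propaganda opsporen’ (With this software, an Antwerp company aims to detect IS propaganda), De Morgen

- ‘Nieuwe technologie uit Antwerpen herkent foto’s van IS-aanhangers’ (New technology from Antwerp recognizes photos of IS supporters), Deredactie.be, VRT

- ‘Phần mềm nhận biết việc tuyên truyền của IS trên mạng’ (Software identifies IS propaganda online), Đầu Báo

- ‘Antwerp start-up’s software detects IS propaganda’, Flanders Today

- ‘Nieuwe technologie uit Antwerpen herkent online IS-propaganda’ (New technology from Antwerp recognizes online IS propaganda), Het Nieuwsblad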